Introduction and motivation

The rapid growth of mobile data traffic requires a significant increase in wireless network capacity. The European Telecommunications Standards Institute (ETSI) has envisioned the fifth-generation (5G) cellular system, with the aim of developing a commercial system by 2020. The growing number of mobile users and the data traffic generated by their devices (smartphones, laptops, and tablets) have outpaced improvements in the battery life and CPUs of mobile handsets. Battery life can be prolonged by means of computation offloading whenever a compute-intensive task cannot be executed efficiently with local computing resources.

In addition to the exponential growth of data traffic in cellular networks, mobile operators are facing a serious issue that is likely underrated. Their traditional primary revenue sources (e.g., voice and messaging) continue to decline gradually due to the wide penetration of over-the-top (OTT) services such as Skype, Tango, Line, FaceTime, WhatsApp, and iMessage, among others. It is crucial for mobile operators not to rely only on traditional revenue sources, but to envision and realize a new ecosystem that generates unique revenue and value by transforming base stations into intelligent service hubs capable of providing compelling services directly from the very edge of the network by means of mobile-edge computing (MEC) [1].

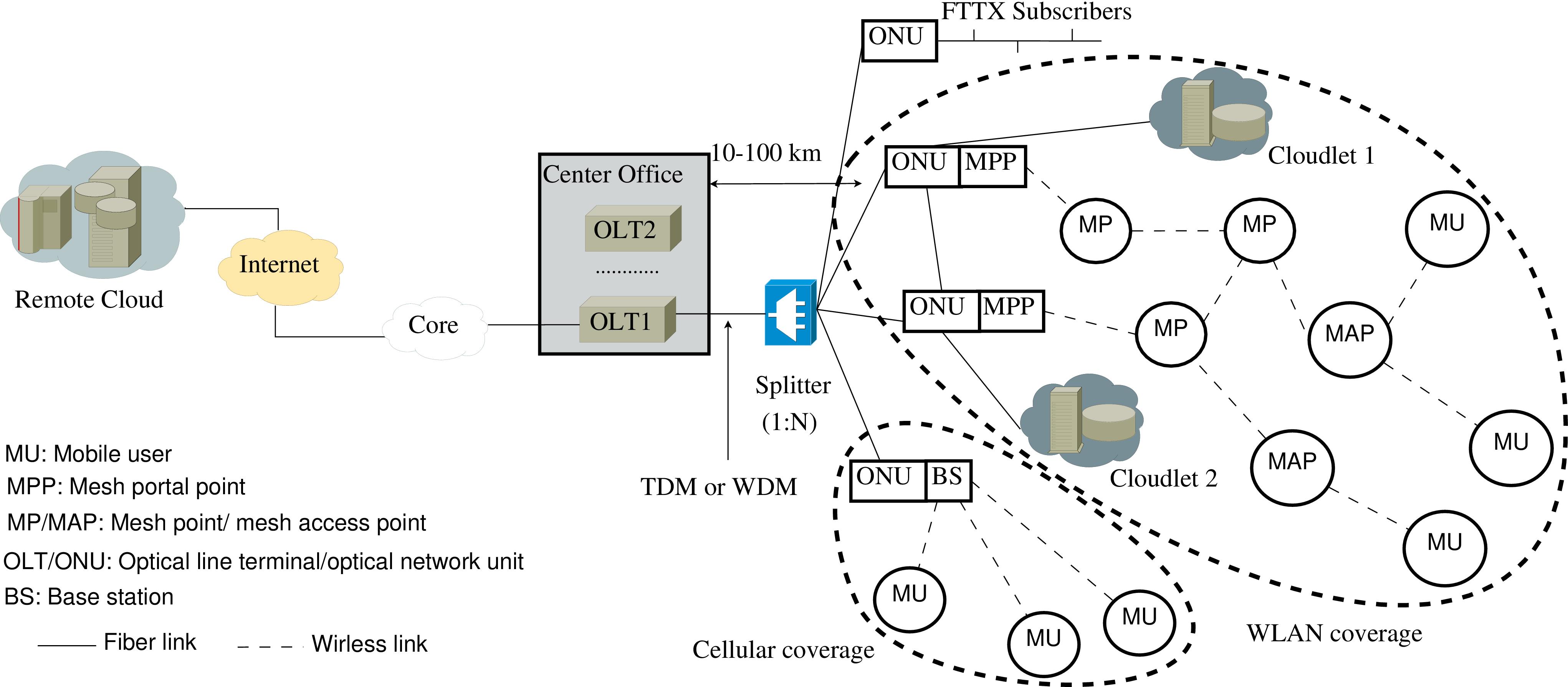

Cloudlets are located at the edge of the Internet, just one wireless hop away from associated mobile devices, enabling new applications that are both compute-intensive and latency-sensitive [2,3]. The resultant two-level cloud-cloudlet architecture leverages both centralized and distributed cloud resources and services, where an infrastructure based on WiFi access technology is deployed [4]. Cloudlets are owned and managed by mobile end-users, while MEC servers are operated by mobile providers. Mobile subscribers access cloudlets via WiFi (see Fig. 1). Since cloudlets are not connected to the mobile network, they do not share network-operator-related knowledge. Thus, cloudlets are suitable for offloading resource-intensive tasks from mobile subscribers in order to prolong battery life.

To maintain the consolidation achievable in traditional clouds and ensure a smooth evolutionary migration path from current 4G LTE-A HetNets, which rely on important performance-enhancing features such as coordinated multipoint (CoMP) transmission and reception among base stations (BSs), we allow a macrocell BS or a small cell (i.e., micro-, pico-, or femtocell) BS to be connected to the cloud through a conventional cloud radio access network (C-RAN) that is based on a reconfigurable radio-over-fiber (RoF) backhaul between the central baseband units (BBUs) and remote radio heads (RRHs). Conversely, the complementary Ethernet-based FiWi access network is realized via a distributed RAN (D-RAN) based on so-called radio-and-fiber (R&F) technologies that rely on EPON/WLAN medium access control (MAC) protocol translation at the optical-wireless interface [5]. Traditionally, the Common Public Radio Interface (CPRI) has been used as the transmission technology in the front-haul. However, if existing fiber network infrastructures are to be used, compatibility with Ethernet-based technologies is essential. Ethernet is a promising front-haul solution due to its maturity and its compatibility with widely deployed wireless and wired access networks [6]. The operation, administration, and maintenance (OAM) capabilities of Ethernet provide a standardized means of management, resilience, and performance monitoring. Moreover, the use of Ethernet as the underlying transport technology in the front-haul may offer the following advantages:

- Use of low-cost industry-standard network equipment

- Ability to share network equipment with fixed access networks, enabling greater convergence and cost reduction

- Use of switches/routers to enable statistical multiplexing gains

- Monitoring through compatible hardware probes

Fig. 1. Cloud-cloudlet empowered FiWi enhanced LTE-A HetNet architecture [4].

Research problems

Internet of Things (IoT) technologies comprising wearable devices and devices with low processing power are severely limited in their ability to locally run compute-intensive applications such as augmented reality and surveillance systems. This issue can be resolved by offloading latency-sensitive applications to resource-rich servers co-located with base stations, which can significantly reduce latency. Computation offloading in MEC raises several challenges: How should an IoT task be split? Should a given task be offloaded or not? Which server should it be offloaded to? When should it be offloaded? Given that applications involving computation offloading are typically more delay-sensitive than those requiring simple data offloading, developing low-latency offloading strategies is of high importance. These strategies execute delay-sensitive tasks on local MEC servers or in remote clouds.
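The questions of whether and where to offload can be illustrated with a minimal sketch. It assumes a simple latency model that is not taken from this project: the completion time at a site is the upload time of the task input plus a round-trip delay plus the execution time. The names `Task`, `Site`, `completion_time`, and `choose_site`, as well as all parameters, are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Task:
    cycles: float      # CPU cycles needed to run the task
    input_bits: float  # data that must be uploaded if the task is offloaded


@dataclass(frozen=True)
class Site:
    name: str
    cpu_hz: float                # processing speed in cycles per second
    uplink_bps: Optional[float]  # None means local execution (no upload)
    rtt_s: float                 # round-trip delay to reach the site


def completion_time(task: Task, site: Site) -> float:
    """Assumed model: upload time + round-trip delay + execution time."""
    upload = 0.0 if site.uplink_bps is None else task.input_bits / site.uplink_bps
    return upload + site.rtt_s + task.cycles / site.cpu_hz


def choose_site(task: Task, sites: Sequence[Site]) -> Site:
    """Pick the site with the smallest estimated completion time."""
    return min(sites, key=lambda s: completion_time(task, s))
```

Under this model, a task with a small cycle count tends to stay on the device because the upload time dominates, whereas a heavy task favors the nearby MEC server when the round-trip delay to the remote cloud outweighs the cloud's faster CPU. Task splitting and the timing of the offloading decision are not covered by this sketch.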

FiWi enhanced mobile networks with MEC servers are able to provide seamless service to mobile subscribers. Since mobile subscribers move, services have to migrate among MEC servers. Because different servers are attached to different base stations, a decision needs to be made on whether and where to migrate a service when a user moves outside the service area of the server currently providing it.

Artificial intelligence (AI) is already powerful enough to surpass people in tasks that were long considered typically human. However, it is key to keep in mind that computers should complement humans, not substitute for them. Unlike AI capabilities designed primarily to take humans out of the loop, many innovative and unforeseen applications require people and robots to work together collaboratively in close interaction with each other. Towards this end, advanced MEC capabilities of future mobile networks empowered with AI will pave the road towards novel services, applications, and new revenue sources.

Research directions

The research directions related to AI based MEC in FiWi enhanced networks include but are not limited to the following challenges:

Service Migration

One of the key design issues in integrating MEC servers with FiWi networks is service migration. Service migration deals with the situation where the location of a subscriber being served by an MEC server changes, and hence a decision has to be made on whether to migrate the service to an alternate MEC server. More specifically, we will investigate, by means of probabilistic analysis and verifying simulations, the impact of different dynamic computation allocation schemes for sub-tasks and of various key design parameters such as cloud availability, cloudlet size, cloudlet connection likelihood, and user mobility on the computing capacity and speed as well as the delay performance of FiWi enhanced networks.
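The migration decision described above can be sketched as a simple cost comparison. The following is a minimal illustration under assumptions that are not part of this project: serving delay grows linearly with the hop distance between the user and a server, and migration incurs a one-time fixed cost. The function `pick_server` and all of its parameters are hypothetical.

```python
from typing import Dict


def pick_server(current: str, hops: Dict[str, int], per_hop_delay_s: float,
                requests_remaining: int, migration_cost_s: float) -> str:
    """Return the server with the lowest total expected delay.

    hops maps each candidate server to its hop distance from the user's
    new location. Staying on the current server costs no migration delay;
    moving to any other server adds the one-time migration cost.
    """
    def total_cost(server: str) -> float:
        serving = hops[server] * per_hop_delay_s * requests_remaining
        moving = 0.0 if server == current else migration_cost_s
        return serving + moving

    return min(hops, key=total_cost)
```

With many requests still to be served, the one-time migration cost is amortized and the closest server wins; with few requests remaining, staying on the current server is cheaper. A fuller treatment would also account for user mobility and server load, which this sketch ignores.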

TensorFlow enabled “Cooperative” Automation

Most research on machine learning has focused on developing automated capabilities that allow computers to substitute for humans in many tasks. However, it is important to note that humans and machines are categorically different. Moreover, even the most intelligent machines do not, and in the near term will not, surpass humans in some specific tasks. Hence, interest has grown in the topic of “cooperative” automation, where AI empowers humans to realize new capabilities and services that did not exist before. Towards this vision, early research has focused on the interaction of people and machines to keep humans in the loop and leverage human participation effectively.

TensorFlow is Google Brain’s second-generation open source machine learning library, released on November 9, 2015 [7], and it has been used in hundreds of Google products. TensorFlow computations are represented as stateful dataflow graphs. The library stems from the need to train neural networks to learn and act similarly to humans, and it can be used to design novel applications that highlight the role of AI in empowering MEC servers.

Fig. 2. TensorFlow [7].

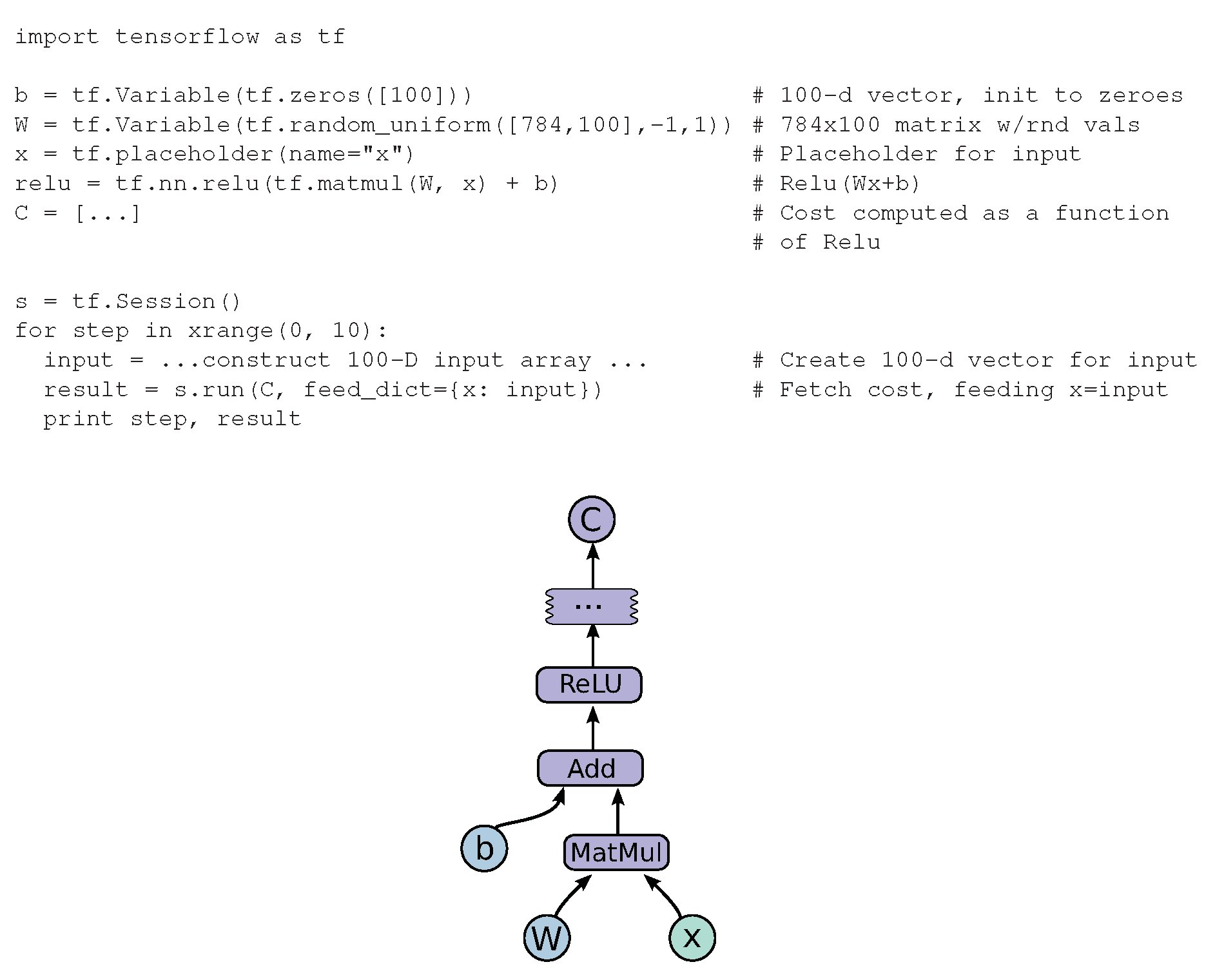

In TensorFlow, dataflow graphs are used for numerical computations. Nodes in the graph represent mathematical operations, while the edges represent multidimensional data arrays, also known as tensors, that are communicated between nodes. TensorFlow provides flexibility and portability and allows researchers to move their ideas from research to products. TensorFlow comes with Python and C++ interfaces (as frontend languages for clients) to build and execute computational graphs. An example Python fragment that builds and executes a TensorFlow graph, together with the resulting computation graph, is depicted in Fig. 3.

Fig. 3. Example of TensorFlow code fragment followed by corresponding computation graph [7].
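To make the dataflow idea concrete, the following is a minimal sketch of a graph evaluator written in plain Python. It deliberately does not use the TensorFlow API and is not the code fragment of Fig. 3. The `Node` class, the `run` function, and the helper operations are hypothetical names chosen for illustration, and tensors are modeled as nested lists.

```python
from typing import Callable, Dict, List, Optional, Sequence

Tensor = List[List[float]]  # a 2-D tensor modeled as nested lists


class Node:
    """A graph node: either a constant (op is None) or an operation."""

    def __init__(self, name: str, op: Optional[Callable[..., Tensor]] = None,
                 inputs: Sequence["Node"] = (), value: Optional[Tensor] = None):
        self.name, self.op, self.inputs, self.value = name, op, inputs, value


def matmul(a: Tensor, b: Tensor) -> Tensor:
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def add(a: Tensor, b: Tensor) -> Tensor:
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def relu(a: Tensor) -> Tensor:
    return [[max(0.0, x) for x in row] for row in a]


def run(node: Node, cache: Optional[Dict[str, Tensor]] = None) -> Tensor:
    """Evaluate a node by first evaluating its inputs; each node runs once."""
    if cache is None:
        cache = {}
    if node.name not in cache:
        if node.op is None:
            cache[node.name] = node.value
        else:
            cache[node.name] = node.op(*(run(i, cache) for i in node.inputs))
    return cache[node.name]


# Build the graph relu(W x + b); the edges carry tensors between the nodes.
W = Node("W", value=[[1.0, -2.0], [0.5, 1.0]])
x = Node("x", value=[[3.0], [1.0]])
b = Node("b", value=[[0.0], [-4.0]])
out = Node("relu", relu, [Node("add", add, [Node("matmul", matmul, [W, x]), b])])
print(run(out))  # [[1.0], [0.0]]
```

Building the graph and executing it are separate steps here, which mirrors the build-then-execute workflow described above. TensorFlow additionally handles device placement, stateful variables, and automatic differentiation, none of which this sketch attempts.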

Edge Content Delivery

MEC servers provide a platform on which additional content delivery services can be developed at the network edge. Content traditionally hosted by Internet services/CDNs is moved towards the network edge: MEC servers operate as local content delivery nodes and serve cached content, thereby reducing traffic load and latency in the core.
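Serving cached content from an MEC server can be illustrated with a small least-recently-used (LRU) cache. This is only a sketch: the choice of LRU as the eviction policy, the `EdgeCache` class, and the `fetch_from_origin` callback standing in for a request to the remote content host are all assumptions made for illustration.

```python
from collections import OrderedDict
from typing import Callable


class EdgeCache:
    """LRU content cache for a single edge node (illustrative only)."""

    def __init__(self, capacity: int, fetch_from_origin: Callable[[str], bytes]):
        self.capacity = capacity
        self.fetch_from_origin = fetch_from_origin  # hypothetical origin lookup
        self.store: "OrderedDict[str, bytes]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> bytes:
        if key in self.store:
            # Cache hit: served at the edge, no core network traffic.
            self.store.move_to_end(key)
            self.hits += 1
            return self.store[key]
        # Cache miss: fetch through the core and keep a local copy.
        self.misses += 1
        content = self.fetch_from_origin(key)
        self.store[key] = content
        if len(self.store) > self.capacity:
            self.store.popitem(last=False)  # evict the least recently used item
        return content
```

Every hit is a request that never crosses the core network, so the hit ratio `hits / (hits + misses)` gives a rough indication of the traffic saved in the core.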

Content Scaling

Instead of routing all mobile subscribers’ data separately to remote clouds, MEC servers are capable of aggregating related traffic, thereby reducing core network traffic loads. Moreover, MEC enables the downscaling of user-generated traffic before it is transmitted to the core. Additionally, MEC servers enable real-time scaling of Internet content if congestion occurs at base station sites.

Researchers

Advisor

Graduate Student

Publications

- A. Ebrahimzadeh and M. Maier,

“Fiber-Wireless (FiWi)-Enhanced Mobile Networks in the 6G Era,”

Hoboken, NJ: Wiley, “Wiley Encyclopedia of Electrical and Electronics Engineering,” J. Webster (Editor), pp. 1-8, Feb. 2022

- M. Maier, A. Ebrahimzadeh, A. Beniiche, and S. Rostami,

“The Art of 6G (TAO 6G): how to wire Society 5.0 (Invited Paper),”

IEEE/OSA Journal of Optical Communications and Networking, OFC 2021 Special Issue, vol. 14, no. 2, pp. A101-A112, Feb. 2022

- F. Boabang, A. Ebrahimzadeh, R. H. Glitho, H. Elbiaze, M. Maier and F. Belqasmi,

“A Machine Learning Framework for Handling Delayed/Lost Packets in Tactile Internet Remote Robotic Surgery,”

IEEE Transactions on Network and Service Management, vol. 18, no. 4, pp. 4829-4845, Dec. 2021

- J. A. Hernández, A. Ebrahimzadeh, M. Maier, and D. Larrabeiti,

“Learning EPON Delay Models From Data: A Machine Learning Approach,”

IEEE/OSA Journal of Optical Communications and Networking, vol. 13, no. 12, pp. 322-330, Dec. 2021

- A. Ebrahimzadeh, M. Maier, and R. H. Glitho,

“Trace-Driven Haptic Traffic Characterization for Tactile Internet Performance Evaluation,”

Proc., International Conference on Engineering and Emerging Technologies (ICEET), Istanbul, Turkey, pp. 1-6, Oct. 2021

- A. Beniiche, A. Ebrahimzadeh, and M. Maier,

“The Way of The DAO: Toward Decentralizing the Tactile Internet,”

IEEE Network, vol. 35, no. 4, pp. 190-197, July/Aug. 2021

- M. Maier, A. Ebrahimzadeh, S. Rostami, and A. Beniiche,

“The Internet of No Things: Making the Internet Disappear and "See the Invisible",”

IEEE Communications Magazine, vol. 58, no. 11, pp. 76-82, Nov. 2020

- G. Otero Pérez, A. Ebrahimzadeh, M. Maier, J. A. Hernández, D. Larrabeiti López, and M. F. Veiga,

“Decentralized Coordination of Converged Tactile Internet and MEC Services in H-CRAN Fiber Wireless Networks,”

IEEE/OSA Journal of Lightwave Technology, vol. 38, no. 18, pp. 4935-4947, Sept. 2020

- A. Ebrahimzadeh and M. Maier,

“Human-in-the-loop models for multi-access edge computing,”

IET Press, "Edge Computing: Models, Technologies and Applications", pp. 147-168, Aug. 2020

- A. Beniiche, A. Ebrahimzadeh, and M. Maier,

“From blockchain Internet of Things (B-IoT) towards decentralising the Tactile Internet,”

Boca Raton, FL: CRC Press, "Blockchain-enabled Fog and Edge Computing: Concepts, Architectures and Applications," pp. 3-30, July 2020

- A. Ebrahimzadeh and M. Maier,

“Cooperative Computation Offloading in FiWi Enhanced 4G HetNets Using Self-Organizing MEC,”

IEEE Transactions on Wireless Communications, vol. 19, no. 7, pp. 4480-4493, July 2020

- A. Ebrahimzadeh and M. Maier,

“Delay-Constrained Teleoperation Task Scheduling and Assignment for Human+Machine Hybrid Activities Over FiWi Enhanced Networks,”

IEEE Transactions on Network and Service Management, vol. 16, no. 4, pp. 1840-1854, Dec. 2019

- A. Ebrahimzadeh and M. Maier,

“Distributed Cooperative Computation Offloading in Multi-Access Edge Computing Fiber-Wireless Networks (Invited Paper),”

Optics Communications, Special Issue on Photonics for 5G Mobile Networks and Beyond, vol. 452, pp. 130-139, Dec. 2019

- A. Ebrahimzadeh, M. Chowdhury, and M. Maier,

“Human-Agent-Robot Task Coordination in FiWi-based Tactile Internet Infrastructures Using Context- and Self-Awareness,”

IEEE Transactions on Network and Service Management, vol. 16, no. 3, pp. 1127-1142, Sept. 2019

- A. Ebrahimzadeh and M. Maier,

“Next Generation Multi-Access Edge-Computing Fiber-Wireless-Enhanced HetNets for Low-Latency Immersive Applications,”

Hershey, PA: IGI Global, "Design, Implementation, and Analysis of Next Generation Optical Networks: Emerging Research and Opportunities," pp. 40-68, July 2019

- M. Maier and A. Ebrahimzadeh,

“Towards Immersive Tactile Internet Experiences: Low-Latency FiWi Enhanced Mobile Networks with Edge Intelligence (Invited Paper),”

IEEE/OSA Journal of Optical Communications and Networking, Special Issue on Latency in Edge Optical Networks, vol. 11, no. 4, pp. B10-B25, Apr. 2019

- A. Ebrahimzadeh and M. Maier,

“Tactile Internet over FiWi enhanced LTE-A HetNets via Artificial Intelligence Embedded Multi-Access Edge-Computing,”

CRC Press, "5G-Enabled Internet of Things," to appear

- A. Ebrahimzadeh, M. Chowdhury, and M. Maier,

“The Tactile Internet over 5G FiWi Architectures,”

Wiley-IEEE Press, "Optical and Wireless Convergence for 5G Networks," pp. 197-223, Oct. 2019

- M. Maier, A. Ebrahimzadeh, and M. Chowdhury,

“The Tactile Internet: Automation or Augmentation of the Human?,”

IEEE Access, vol. 6, pp. 41607-41618, July 2018

References

[1] M. Patel et al., "Mobile-Edge Computing Introductory Technical White Paper," White Paper, Mobile-edge Computing (MEC) industry initiative, 2014.

[2] M. Satyanarayanan et al., "The case for VM-based cloudlets in mobile computing," IEEE Pervasive Computing, vol. 8, no. 4, pp. 14-23, 2009.

[3] M. Satyanarayanan et al., "An open ecosystem for mobile-cloud convergence," IEEE Communications Magazine, vol. 53, no. 3, pp. 63-70, 2015.

[4] M. Maier and B. P. Rimal, "Invited paper: The audacity of fiber-wireless (FiWi) networks: revisited for clouds and cloudlets," China Communications, vol. 12, no. 8, pp. 33-45, 2015.

[5] Nokia Networks, "Intelligent base stations," White Paper, 2014.

[6] N. J. Gomes et al., "Fronthaul evolution: From CPRI to Ethernet," Optical Fiber Technology, vol. 26, part A, pp. 50-58, 2015.

[7] M. Abadi et al., "TensorFlow: Large-scale machine learning on heterogeneous systems," 2015, www.tensorflow.org.